AI Likeness Detection: Key Points at a Glance

- Access Expansion: The software is now accessible to talent agencies, management firms, and the celebrities that they represent.

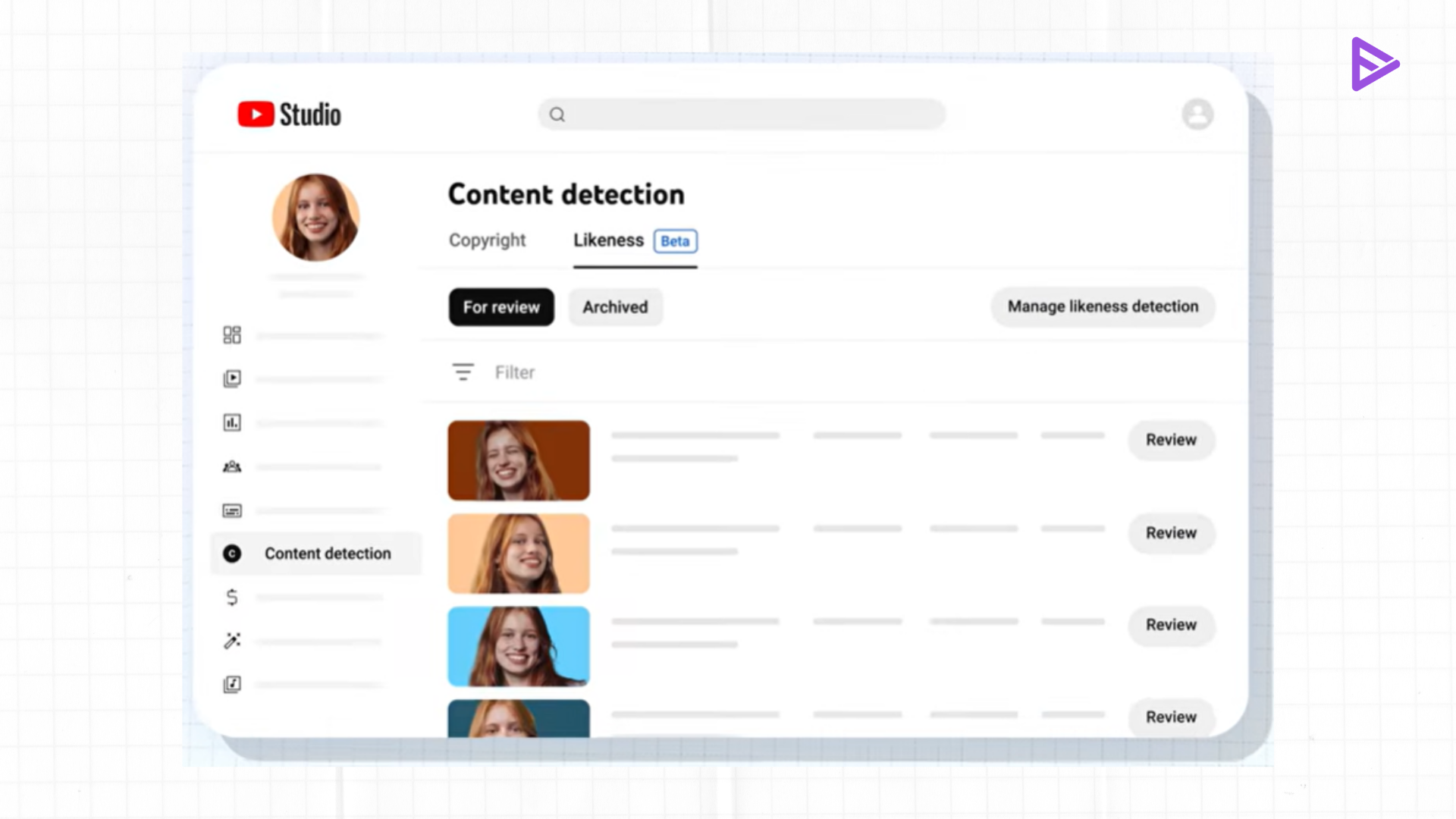

- Deepfake Detection: The likeness detection tool is designed to identify AI-generated content, including deepfakes of a person's face, and flag it.

- Removal Requests: Much as Content ID does for copyrighted songs and videos, the tool lets performers locate AI-generated content that depicts them and request its removal.

- No Channel Needed: Celebrities are eligible for the tool even if they do not post any content on a YouTube channel of their own.

- Industry Partnership: Leading talent agencies such as Creative Artists Agency (CAA), United Talent Agency (UTA), William Morris Endeavor (WME), and Untitled Management worked with YouTube to refine the software.

As AI becomes capable of mimicking a person's voice, and even their face, with great accuracy, the entertainment industry is facing a new form of attack: deepfakes, AI-generated videos that show someone saying or doing something they never said or did. These pose a serious risk to actors and to the agencies that represent them. In response, YouTube has rolled out a major update to its likeness detection tools.

YouTube is making this possible by collaborating with leading talent management firms such as Creative Artists Agency (CAA), United Talent Agency (UTA), and William Morris Endeavor (WME). The program lets celebrities and other entertainers monitor how their likeness appears on the platform, regardless of whether they have their own channels.

Protecting the Stars in the Age of AI

AI-based facial recognition represents a breakthrough for online safety in the entertainment industry. Traditionally, the industry has relied on Content ID to safeguard its copyrighted material. Protecting a person's physical appearance and voice, however, is a far more complex problem. By extending to celebrities the kind of protection already applied to films and music, YouTube acknowledges the value and sensitivity of a person's identity.

In my view, this is an inevitable step for social media platforms to take. The rise of deepfakes raises concerns not only about the spread of false information but also about the "digital consent" of the performers themselves. Although most fans use AI to create parodies, the potential for harm is very high. It makes sense for agencies to act as "digital bodyguards" for their clients.

Final Thoughts

As AI continues to develop, our methods of regulating its use must evolve as well. YouTube's latest change reflects a commitment to social responsibility, placing emphasis on the welfare of the people at the core of the entertainment industry. By enabling talent and their management to identify and remove deepfake videos made without consent, YouTube is contributing to a safer digital ecosystem.